EXALIO

Modern Data Architecture

Modern Data Architecture: Scalable, Agile, AI-Ready Foundations

We evolve your legacy data systems into a modern, agile architecture, creating a single source of truth for BI and AI.

The Modern Data Architecture Stack

Data Transformation — The Foundation

The complete lifecycle of modernization, from data maturity assessment and architecture design (Data Mesh, Lakehouse) to the engineering and migration of your data pipelines.

Data Lakehouse — The Platform

A unified platform combining a data lake’s flexibility for all data types with a data warehouse’s structure and governance, creating a single source of truth for all workloads.

Data Virtualization — The Access Layer

A logical data layer that provides unified, real-time access to all your data sources without the cost and complexity of physical data movement or replication.

AI-Ready

Data Lakehouse: The Best of Both Worlds

The Data Lakehouse is the foundation of a modern data strategy. It eliminates silos by providing a single, unified platform for all data types (structured and unstructured) and all workloads (BI, AI, and streaming), ensuring your models and tools work from the same high-quality data.

| Feature | Traditional Data Lake | Traditional Data Warehouse | Data Lakehouse |
|---|---|---|---|
| Store all data types | ✓ | ✗ | ✓ |
| ACID transactions | ✗ | ✓ | ✓ |
| Schema enforcement | ✗ | ✓ | ✓ |
| Query performance | ✗ | ✓ | ✓ |
| ML/AI support | ✓ | ✗ | ✓ |
| Cost-effective storage | ✓ | ✗ | ✓ |

Core Benefits of a Modern Data Architecture

The journey from legacy, siloed systems to modern, agile data infrastructure

Single Version of Truth

Unify data from all sources in one platform, eliminate silos and redundant copies, and ensure all teams work from the same governed data foundation.

Support for AI/ML at Scale

Provide massive scale for training data, support diverse data types (structured, semi-structured, unstructured), and enable AI/ML workloads on a modern platform foundation.

Improved Data Quality & Governance Readiness

Use built-in schema validation and enforcement, integrate with governance tools, and strengthen trust and readiness for analytics and AI initiatives.
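
To make schema enforcement concrete, here is a minimal Python sketch of validating records against a declared schema before they are written. The `ORDERS_SCHEMA` table, the `enforce_schema` and `write_batch` helpers, and the all-or-nothing batch rule are hypothetical illustrations, not the API of any particular lakehouse platform; lakehouse table formats typically perform comparable checks inside the engine.

```python
from typing import Any

# Hypothetical target-table schema: column name -> required Python type.
ORDERS_SCHEMA: dict[str, type] = {
    "order_id": int,
    "amount": float,
    "region": str,
}


class SchemaViolation(ValueError):
    """Raised when a record does not match the declared schema."""


def enforce_schema(record: dict[str, Any], schema: dict[str, type]) -> dict[str, Any]:
    """Return the record unchanged if it matches the schema, otherwise raise."""
    missing = schema.keys() - record.keys()
    extra = record.keys() - schema.keys()
    if missing or extra:
        raise SchemaViolation(
            f"missing columns {sorted(missing)}, unexpected columns {sorted(extra)}"
        )
    for column, expected in schema.items():
        value = record[column]
        # bool is a subclass of int, so reject it explicitly for int columns.
        if not isinstance(value, expected) or (expected is int and isinstance(value, bool)):
            raise SchemaViolation(
                f"column {column!r}: expected {expected.__name__}, got {type(value).__name__}"
            )
    return record


def write_batch(batch: list[dict[str, Any]], table: list[dict[str, Any]]) -> None:
    """Validate every record first, then append; one bad record rejects the whole batch."""
    validated = [enforce_schema(r, ORDERS_SCHEMA) for r in batch]
    table.extend(validated)


orders: list[dict[str, Any]] = []
write_batch([{"order_id": 1, "amount": 19.99, "region": "EMEA"}], orders)
try:
    write_batch([{"order_id": "2", "amount": 5.0, "region": "APAC"}], orders)
except SchemaViolation as err:
    print(err)  # column 'order_id': expected int, got str
```

Validating the whole batch before appending means a malformed record never leaves a half-written batch behind.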

Agility, Automation, and Cost Efficiency

Accelerate time-to-insight with automated pipelines and DataOps practices, scale with growing data volume and variety, and reduce infrastructure and maintenance overhead.

Data Virtualization Access Layer

Real-Time Insights

Query data directly at the source—no waiting for batch ETL—so consumers always access the latest data.

Reduced Cost & Complexity

Minimize replication and storage overhead, reduce ETL development and maintenance, and provide a single point of access across sources.

Agile Data Delivery

Accelerate time-to-market for data products and rapid prototyping by assembling logical views without moving data.

Centralized Security & Governance

Apply consistent policies from a single control point with fine-grained access control and simpler compliance management.
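
As an illustration of a single control point, the sketch below applies row-level filtering and column masking to every result before it reaches a consumer. The role names, the `POLICIES` table, and the `***` mask are assumptions made up for this example; virtualization products express such rules in their own policy languages.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Policy:
    """Hypothetical per-role policy: which rows and columns a role may see."""
    allowed_regions: frozenset[str]
    masked_columns: frozenset[str] = field(default_factory=frozenset)


# One central policy table, consulted for every query regardless of source.
POLICIES: dict[str, Policy] = {
    "analyst_emea": Policy(frozenset({"EMEA"}), frozenset({"email"})),
    "admin": Policy(frozenset({"EMEA", "APAC", "AMER"})),
}


def apply_policy(role: str, rows: list[dict]) -> list[dict]:
    """Filter rows (row-level security) and mask columns (column-level security)."""
    policy = POLICIES.get(role)
    if policy is None:
        return []  # deny by default for unknown roles
    visible = []
    for row in rows:
        if row.get("region") not in policy.allowed_regions:
            continue
        visible.append(
            {k: ("***" if k in policy.masked_columns else v) for k, v in row.items()}
        )
    return visible


rows = [
    {"customer": "Acme", "region": "EMEA", "email": "a@acme.example"},
    {"customer": "Zen", "region": "APAC", "email": "z@zen.example"},
]
print(apply_policy("analyst_emea", rows))
# [{'customer': 'Acme', 'region': 'EMEA', 'email': '***'}]
```

Because every source is read through the same `apply_policy` call, changing a rule in one place changes it for all consumers.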

How Data Virtualization Works (Query Data Where It Lives)

Catalog Data Sources

Connect to warehouses, lakes, operational databases, and SaaS systems to establish a unified catalog.
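
A unified catalog can start as simple introspection of each connected source. This sketch uses in-memory SQLite databases as hypothetical stand-ins for a CRM and an ERP system, and the `build_catalog` helper is invented for illustration; a real platform would use its own connectors for each warehouse, lake, or SaaS API.

```python
import sqlite3


def build_catalog(sources: dict[str, sqlite3.Connection]) -> dict[str, dict[str, list[str]]]:
    """Map each source name to its tables, and each table to its column names."""
    catalog: dict[str, dict[str, list[str]]] = {}
    for source_name, conn in sources.items():
        tables = [
            row[0]
            for row in conn.execute(
                "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
            )
        ]
        catalog[source_name] = {}
        for table in tables:
            # Quote the identifier, doubling any embedded double quotes.
            quoted = '"' + table.replace('"', '""') + '"'
            # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk) per column.
            columns = [col[1] for col in conn.execute(f"PRAGMA table_info({quoted})")]
            catalog[source_name][table] = columns
    return catalog


# Hypothetical sources standing in for a CRM and an ERP system.
crm = sqlite3.connect(":memory:")
crm.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, region TEXT)")
erp = sqlite3.connect(":memory:")
erp.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)")

catalog = build_catalog({"crm": crm, "erp": erp})
print(catalog)
# {'crm': {'customers': ['id', 'name', 'region']}, 'erp': {'orders': ['id', 'customer_id', 'amount']}}
```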

Create the Logical Layer

Define unified views and data models that abstract physical complexity for consumers.
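
The sketch below shows the idea of a logical view using only Python's built-in `sqlite3` module: two attached in-memory databases stand in for separate sources (the `crm` and `erp` names and their tables are hypothetical), and a view joins them at query time without copying rows into a new table. It is an in-process analogy, not a federation engine.

```python
import sqlite3

# One connection acts as the virtualization layer; each ATTACH stands in for a source.
hub = sqlite3.connect(":memory:")
hub.execute("ATTACH DATABASE ':memory:' AS crm")
hub.execute("ATTACH DATABASE ':memory:' AS erp")

# Seed the "sources" (in practice these already exist and are not copied).
hub.execute("CREATE TABLE crm.customers (id INTEGER PRIMARY KEY, name TEXT, region TEXT)")
hub.execute("CREATE TABLE erp.orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)")
hub.executemany(
    "INSERT INTO crm.customers VALUES (?, ?, ?)",
    [(1, "Acme", "EMEA"), (2, "Zen", "APAC")],
)
hub.executemany(
    "INSERT INTO erp.orders VALUES (?, ?, ?)",
    [(10, 1, 250.0), (11, 1, 100.0), (12, 2, 75.0)],
)

# The logical layer: a view that joins across sources and stores no rows itself.
# SQLite only allows TEMP views to reference tables in other attached databases.
hub.execute(
    """
    CREATE TEMP VIEW customer_revenue AS
    SELECT c.name, c.region, SUM(o.amount) AS revenue
    FROM crm.customers AS c
    JOIN erp.orders AS o ON o.customer_id = c.id
    GROUP BY c.id, c.name, c.region
    """
)

rows = hub.execute("SELECT * FROM customer_revenue ORDER BY revenue DESC").fetchall()
print(rows)  # [('Acme', 'EMEA', 350.0), ('Zen', 'APAC', 75.0)]
```

Consumers query `customer_revenue` by name; if the underlying tables change, the next query reflects it because the view is evaluated on read.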

Query Optimization

Use intelligent routing, pushdown, and caching to optimize performance across federated sources.

Deliver to Consumers

Expose virtualized access through APIs, SQL interfaces, and BI tool connections.

Our Architecture Approach

Process timeline

Assessment

Conduct a comprehensive audit of your current architecture, identify pain points and business requirements, and prioritize use cases for modernization.

Design

Deliver a target architecture blueprint, recommend the optimal technology stack (Lakehouse platform + Virtualization tools), and create a phased migration strategy.

Build, Migrate & Optimize

Execute the plan: environment setup and configuration, data pipeline development and migration, and implementation of the virtualization and governance layers.

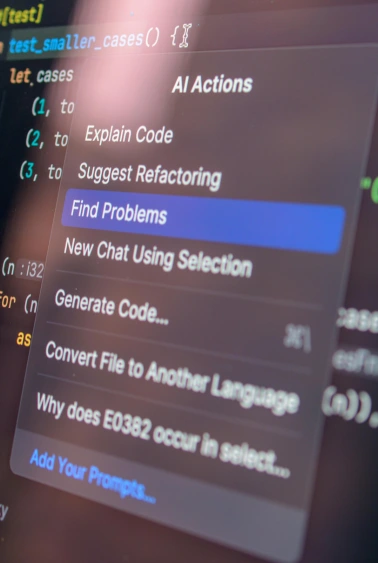

Have any questions? Find answers here.

Some frequently asked questions about our AI software dashboard.

How much content can I generate?

With the power of AI, I can generate an impressive amount of content. Whether you need a short paragraph or a lengthy essay, I can provide you with text to meet your requirements. Just let me know how much content you need, and I'll be happy to assist you!

How does it generate responses?

The generation of responses is based on a combination of machine learning and natural language processing. I have been trained on a diverse range of data, including books, articles, and websites, to understand and mimic human language patterns. When you provide a prompt or ask a question, I analyze the context and use that information to generate a relevant and coherent response. Through this iterative process, I aim to provide helpful and meaningful content.

Can AI really write as well as a human?

AI has made significant progress in generating human-like text, but there are still differences between AI-generated content and human writing. While AI can produce coherent and contextually appropriate responses, it lacks the depth of human experience, creativity, and nuanced understanding. AI can be a valuable tool for generating content quickly and efficiently, but it's important to consider human input and review for tasks that require subjective or creative elements.

How do you handle my data?

As an AI language model, I don't have direct access to personal data about individuals unless it has been shared with me during our conversation. I am designed to respect user privacy and confidentiality. My primary function is to provide information and answer questions to the best of my knowledge and abilities. If you have any concerns about privacy or data security, please let me know, and I will do my best to address them.